Category: Trends

Rethinking the Professional Services Organization Post-2020 – Constellation

In 2020, professional services organizations (PSOs) are experiencing at least three distinct and substantial disruptions to their business, and often several secondary ones as well.

The arrival of COVID-19 earlier this year led to lockdowns around the globe that have curtailed client demand and hampered project delivery. Those same lockdowns have also largely confined project staff to their homes, slowing client projects and reducing billable work. Finally, a deepening economic downturn is wreaking havoc on both client budgets and PSOs’ own finances, leading to urgent calls for cost cutting and new efficiencies.

It’s the proverbial perfect storm of major challenges, and it is having a dramatic impact on customer success as well as on the morale of the talent base in most PSOs. This has prompted a widespread push within firms to rapidly rethink and update their operating models, determine the right mitigations, and respond effectively.

Except, as many have found, responding quickly and effectively to these challenges is hard to do when so much uncontrolled change is currently taking place.

In fact, many PSOs will be tempted to focus first on cost control to ensure short-term survival. While this is a natural response that does offer immediate and tangible control over a once-in-a-lifetime event filled with uncertainty, organizations must also remain mindful of the vital characteristics of professional services firms. Overly enthusiastic responses to this year’s disruptions can adversely impact the organization’s strategic operating model over the long term.

Sustaining a Bridge to a Better Future

The risk lies in damaging the overarching characteristics of professional services firms. Two stand-out characteristics in particular make them unique. The first is the highly bespoke nature of their work, which is tailored to each client regardless of tools, services model, or data. The second is the special nature of cultivating successful long-term client relationships. Both of these characteristics require intensive and skilled delivery capabilities. Finally, underpinning both of these foundational characteristics is the high-leverage human capital model that determines both revenue and profit for the PSO, and which needs to be finely tuned across the many layers of the organization.

This was a painful lesson learned across the PSO industry from the 2008-2009 financial crisis. Decisions made too expediently hurt firms long after the crisis had passed. Studies have shown that decisions to reduce talent or cut compensation and billable time affected firms’ client relationships as well as their brand image for many years. Conversely, the firms that weathered the short-term pressure and managed to keep hard-to-replace human capital prospered as the economy recovered. In this same vein, organizations with a clear sense of the type of PSO that must emerge from the veil of 2020, and of what it will take to thrive in the resulting market conditions, will be in the best position to prosper.

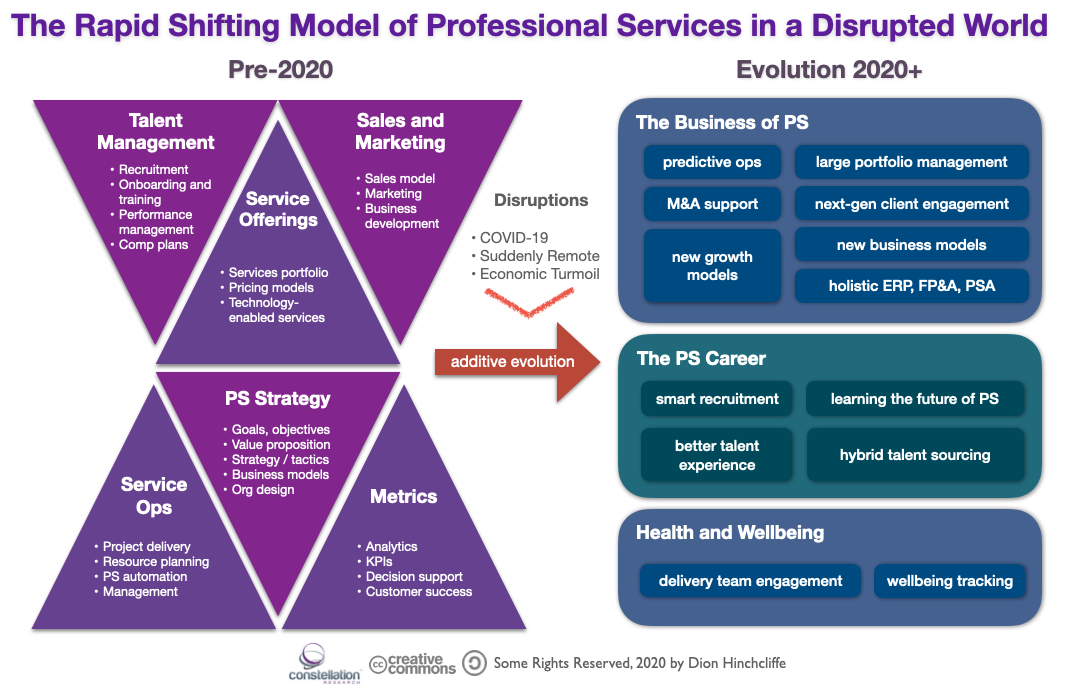

Figure 1: The Pre-2020 Model of PSOs is Giving Way to a New Client/Talent Focused Model

Priorities First: Update the Firm to Reflect New Realities

It’s therefore paramount that any cost control discussions be viewed through the constructive lens of the organization’s business and talent strategy, along with its future operating model. To do that, the business must first recalibrate its core strategy and execution with today’s fresh realities in mind:

- Clients are going to be much more selective and demanding about projects going forward

- Deal flows and talent sourcing will be more turbulent until well after the pandemic passes

- Delivery talent will require better enablement and support for their new daily work realities

- Major opportunities now exist in creating a more holistic and dynamic PSO operating model that can cost less and lose less talent while actually increasing margin

- Significant new types of business and growth opportunities have come within reach to offset recent revenue impacts

In other words, there are major prospects to do more than just survive through brute-force cost cutting. Instead, more elegant solutions present themselves if the PSO first engages in a rapid rethinking informed by the current art of the possible. In essence, this is a combined business and digital transformation. This transformation will generally consist of a combination of bold new ideas, better integration and consolidation of operational activities, and powerful new technology tools including automation, holistic user experience upgrades, and powerful new concepts from the realm of digital business.

The Evolution of the PSO Through Proactive, Targeted Transformation

As it turns out, the typical PSO has been experiencing quite a bit of change in the last couple of years anyway. Trends such as more dynamic staffing approaches, better automation of delivery, and more project analytics and diagnostics have all led to improved services, higher margins, and greater customer success. These changes were often led initially by technology, which in turn enabled advances in re-imagining PSO operating models through new capabilities. As a result, a new type of PSO is emerging that is more agile, lean, digitally infused, and experience-centric.

Below are the key shifts being seen in PSOs as a result of the events of 2020, driven by industry trends, technology innovations, and changes in the world. These trends are grouped into three categories: the business and its clients, the worker, and the overall health and wellbeing of all PSO stakeholders.

The Business of PS: Trends

- Predictive operations. Projects are now instrumented well enough, and sufficient historical project baseline data is now available, to routinely predict risks and anticipate opportunities before they actually happen. Using these insights can lead to significant cost savings, higher success rates, and quality improvements.

- M&A support. A wave of mergers and acquisitions will inevitably occur out of the events of 2020, particularly of smaller PS firms. PSOs that have sophisticated infrastructure and processes for managing the financial, operational, and structural merging of clients will have an advantage.

- Large portfolio management. Most PSOs are not taking advantage of the ability to manage large portfolios of projects across a client to maximize talent reuse, achieve economies of scale, and improve delivery.

- Next-generation client engagement. As the industry becomes hypercompetitive, the time is right for a more engaging, sustained, informative, and transparent connection to the client using a combination of technology, user experience, and real-time data flows. This higher-quality delivery approach will result in an increased share of projects within clients relative to other PSOs.

- New growth models. Most PSOs have readily accessible untapped growth opportunities which they can add to their existing portfolios to increase sales.

- New business models. The time is ripe for PSOs to move laterally into adjacent business models such as subscriptions, IP licensing, strategic data services, and annual recurring revenue that can provide vital new green fields for resilience and expansion.

The PS Career: Trends

- Smart recruitment. New models exist for recruiting and project matching via AI, while talent screening and the pre/onboarding process can be made more intelligent and automated. This will drive bottom-line business benefits while also increasing acquisition and retention.

- Better talent experience. The top workers will have expectations of a general return to the quality of work life they had prior to 2020. PSOs that proactively deliver on this in remote work scenarios while also uplevelling the overall worker experience will have significant retention benefits.

- Learning the future of PS. The existential changes and new opportunities in the PSO world must be better communicated to workers, so they can help realize as well as reap the benefits enumerated here.

- Hybrid talent sourcing. New dynamic staffing models — aka the Gig Economy for professional services — will mix with full-time employment to create much stronger teams that are also more cost contoured while attracting new types of diverse talent.

Health and Wellbeing: Trends

- Delivery team engagement. Creating enabling and more connected working environments in the new remote work situation, particularly for delivery teams, is essential to preserve a connection to the “mothership” while also nurturing workers through challenging times.

- Wellbeing tracking. Tools and processes that track the physical, mental, and psychological health of PSO stakeholders, from project staff to back office to clients, and that provide appropriate assistance when needed, will be increasingly expected and have already become a hallmark of best-in-class employers.

In summary, PSOs have a historic opportunity to pivot to adapt to the significant disruptions they have faced so far in 2020. By adopting an updated operating model and quickly delivering on it with clients and talent using new solutions, PSOs can avoid the most damaging types of cost cutting while being positioned for growth in 2021 and beyond. That is, as long as they are willing to think outside the box and adopt sensible yet far-reaching shifts in their strategies, tools, and operating models.

Source: https://www.constellationr.com/blog-news/rethinking-professional-services-organization-post-2020

Here Are the Top Five Questions CEOs Ask About AI – CIO

Recently in a risk management meeting, I watched a data scientist explain to a group of executives why convolutional neural networks were the algorithm of choice to help discover fraudulent transactions. The executives—all of whom agreed that the company needed to invest in artificial intelligence—seemed baffled by the need for so much detail. “How will we know if it’s working?” asked a senior director to the visible relief of his colleagues.

Although they believe in AI’s value, many executives are still wondering about its adoption. The following five questions are boardroom staples:

1. “What’s the reporting structure for an AI team?”

Organizational issues are never far from the minds of executives looking to accelerate efficiencies and drive growth. And, while this question isn’t new, the answer might be.

Captivated by the idea of data scientists analyzing potentially competitively-differentiating data, managers often advocate formalizing a data science team as a corporate service. Others assume that AI will fall within an existing analytics or data center-of-excellence (COE).

AI positioning depends on incumbent practices. A retailer’s customer service department designated a group of AI experts to develop “follow the sun chatbots” that would serve the retailer’s increasingly global customer base. Conversely, a regional bank considered AI more of an enterprise service, centralizing statisticians and machine learning developers into a separate team reporting to the CIO.

These decisions were vastly different, but they were both the right ones for their respective companies.

Considerations:

- How unique (e.g., competitively differentiating) is the expected outcome? If the proposed AI effort is seen as strategic, it might be better to create a team of subject matter experts and developers with its own budget, headcount, and skills so as not to distract from or siphon resources from existing projects.

- To what extent are internal skills available? If data scientists and AI developers are already clustered within a COE, it might be better to leave the team as-is, hiring additional experts as demand grows.

- How important will it be to package and brand the results of an AI effort? If the AI outcome is a new product or service, it might be better to create a dedicated team that can deliver the product and assume maintenance and enhancement duties as it continues to innovate.

2. “Should we launch our AI effort using some sort of solution, or will coding from scratch distinguish our offering?”

When people hear the term AI they conjure thoughts of smart Menlo Park hipsters stationed at standing desks wearing ear buds in their pierced ears and writing custom code late into the night. Indeed, some version of this scenario is how AI has taken shape in many companies.

Executives tend to romanticize AI development as an intense, heads-down enterprise, forgetting that development planning, market research, data knowledge, and training should also be part of the mix. Coding from scratch might actually prolong AI delivery, especially with the emerging crop of developer toolkits (Amazon SageMaker and Google Cloud AI are two) that bundle open source routines, APIs, and notebooks into packaged frameworks.

These packages can accelerate productivity, carving weeks or even months off development schedules. Or they can complicate collaboration efforts.

Considerations:

- Is time-to-delivery a success metric? In other words, is there lower tolerance for research or so-called “skunkworks” projects where timeframes and outcomes could be vague?

- Is there a discrete budget for an AI project? This could make it easier to procure developer SDKs or other productivity tools.

- How much research will developer toolboxes require? Depending on your company’s level of skill, in the time it takes to research, obtain approval for, procure, and learn an AI developer toolkit your team could have delivered important new functionality.

3. “Do we need a business case for AI?”

It’s all about perspective. AI might be positioned as edgy and disruptive with its own internal brand, signaling a fresh commitment to innovation. Or it could represent the evolution of analytics, the inevitable culmination of past efforts that laid the groundwork for AI.

I’ve noticed that AI projects are considered successful when they are deployed incrementally, when they further an agreed-upon goal, when they deliver something the competition hasn’t done yet, and when they support existing cultural norms.

Considerations:

- Do other strategic projects require business cases? If they do, decide whether you want AI to be part of the standard cadre of successful strategic initiatives, or to stand on its own.

- Are business cases generally required for capital expenditures? If so, would bucking the norm make you an innovative disruptor, or an obstinate rule-breaker?

- How formal is the initiative approval process? The absence of a business case might signal a lack of rigor, jeopardizing funding.

- What will be sacrificed if you don’t build a business case? Budget? Headcount? Visibility? Prestige?

4. “We’ve had an executive sponsor for nearly every high-profile project. What about AI?”

Incumbent norms once again matter here. But when it comes to AI the level of disruption is often directly proportional to the need for a sponsor.

A senior AI specialist at a health care network decided to take the time to discuss possible AI use cases (medication compliance, readmission reduction, and deep learning diagnostics) with executives “so that they’d know what they’d be in for.” More importantly, she knew that the executives who expressed the most interest in the candidate AI undertakings would be the likeliest to promote her new project. “This is a company where you absolutely need someone powerful in your corner,” she explained.

Considerations:

- Does the company’s funding model require an executive sponsor? Challenging that rule might cost you time, not to mention allies.

- Have high-impact projects with no executive sponsor failed? You might not want your AI project to be the first.

- Is the proposed AI effort specific to a line of business? In this case enlisting an executive sponsor familiar with the business problem AI is slated to solve can be an effective insurance policy.

5. “What practical advice do you have for teams just getting started?”

If you’re new to AI you’ll need to be careful about departing from norms, since this might attract undue attention and distract from promising outcomes. Remember Peter Drucker’s quote about culture eating strategy for breakfast? Going rogue is risky.

On the other hand, positioning AI as disruptive and evolutionary can do wonders for both the external brand and internal employee morale, assuring constituents that the company is committed to innovation and considers emerging tech to be strategic.

Either way, the most important success measures for AI are setting accurate expectations, sharing them often, and addressing questions and concerns without delay.

Considerations:

- Distribute a high-level delivery schedule. An unbounded research project is not enough. Be sure you’re building something—AI experts agree that execution matters—and be clear about the delivery plan.

- Help colleagues envision the benefits. Does AI promise first mover advantage? Significant cost reductions? Brand awareness?

- Explain enough to color in the goal. Building a convolutional neural network to diagnose skin lesions via image scans is a world away from using unsupervised learning to discover unanticipated correlations between customer segments. As one of my clients says, “Don’t let the vague in.”

These days AI has mojo. Companies are getting serious about it in a way they haven’t been before. And the more your executives understand about how it will be deployed—and why—the better the chances for delivering ongoing value.

Source: https://www.cio.com/article/3318639/artificial-intelligence/5-questions-ceos-are-asking-about-ai.html
